Every company today has heard the phrase: data is the new oil. And like oil, raw data has almost no value on its own. It needs to be extracted, cleaned, structured, and refined before it becomes useful. The companies that master this process gain a decisive competitive edge. The ones that don't are sitting on an untapped goldmine — and watching opportunities slip by.

Why So Many Companies Struggle

The gap between "having data" and "using data" is wider than most people think. In our experience working with companies across energy, utilities and industry, we see the same obstacles come up again and again:

No time. Data teams are already stretched. Cleaning and structuring data feels like plumbing work — unglamorous, time-consuming, and always pushed to next quarter.

High costs. Building a proper data infrastructure from scratch requires significant investment — in tools, talent, and time. Many companies don't know where to start, or underestimate the scope.

Legacy IT systems. Existing systems weren't designed with data sharing in mind. Extracting data from them, let alone combining it with other sources, can be an engineering nightmare.

Lack of in-house expertise. Knowing how to collect data is one thing. Knowing how to model it, version it, and turn it into reliable indicators is a different skill set entirely.

Messy, scattered, inconsistent data. Different teams use different formats. Different systems use different timestamps. A value that means one thing in one database means something else in another. Reconciling all of this takes time that nobody has.

A Real-World Example: EV Charging

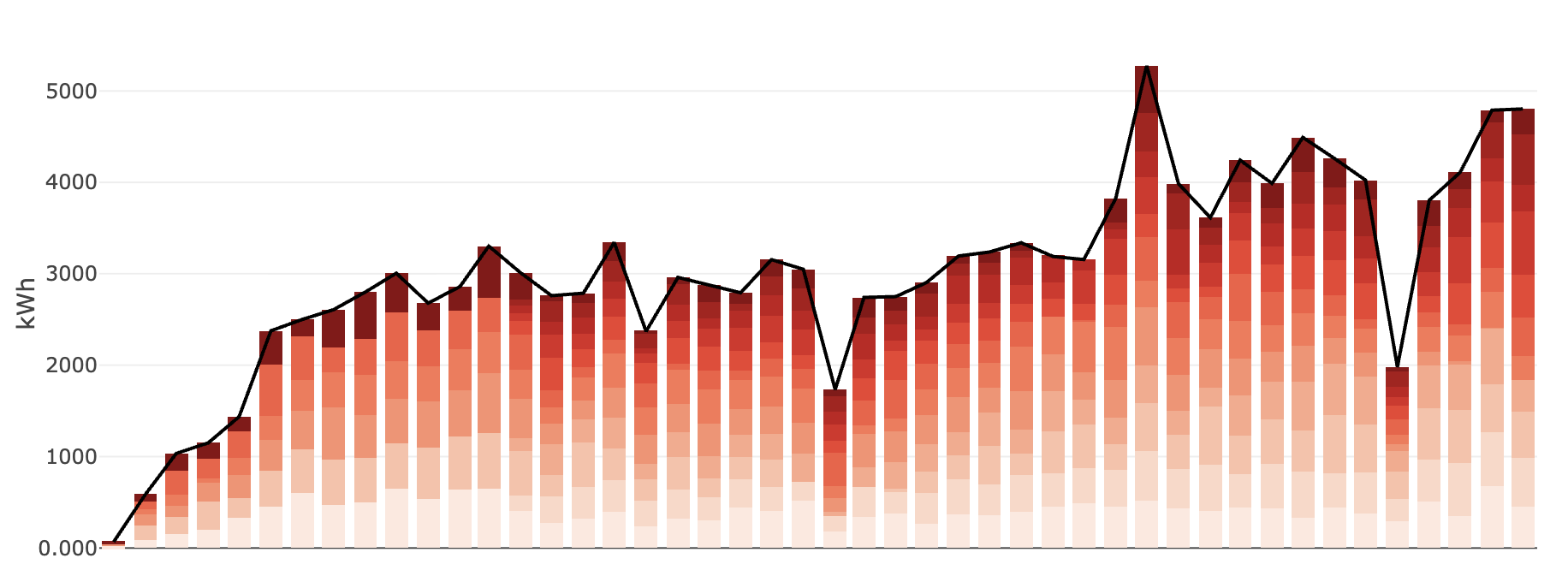

The EV charging sector is a perfect illustration of this challenge. As the industry scales rapidly, operators are managing networks of charging stations supplied by dozens of different hardware and software vendors — each delivering data in their own format, at their own frequency, with their own quirks.

Aggregating consumption data, availability metrics, and fault reports across all these sources into a single coherent view is not trivial. Without the right infrastructure, analysts spend most of their time wrangling data instead of analysing it. Reports are delayed. Decisions are made on incomplete information. Anomalies go undetected until they become costly problems.

The Real Cost of Doing Nothing

Poor data management is rarely visible on a balance sheet — until it is. A forecast built on stale data leads to bad procurement decisions. A pipeline that silently fails means your team is working with last week's numbers without knowing it. An indicator that means different things to different teams creates friction, mistrust, and duplicated work.

The cost is not just operational. It's strategic. Companies that can't trust their data can't move fast.

What Good Data Management Looks Like

When data infrastructure is done right, it becomes invisible — in the best possible way. Data flows automatically from sources to consumers. Every indicator is versioned and traceable: you can always see what the value was yesterday, last week, or last year, and understand exactly how it was computed. Anomalies are caught early. Analysts spend their time on analysis, not on data wrangling.

This is what we build at Pythonian with the Timeseries Refinery: a platform that makes time series data reliable, shareable, and actionable — without requiring a team of data engineers to maintain it.

Let's Talk

If your team is spending more time fighting with data than learning from it, that's a solvable problem. We've helped companies in energy, utilities, and industry take control of their data — and the results show up quickly.

Curious to know what that could look like for you?

Request a demo