I recently ran two live experiments with an AI agent connected to our platform via MCP (the Model Context Protocol). I expected it to be useful. I didn't expect it to surprise me.

Session one: a debugging job

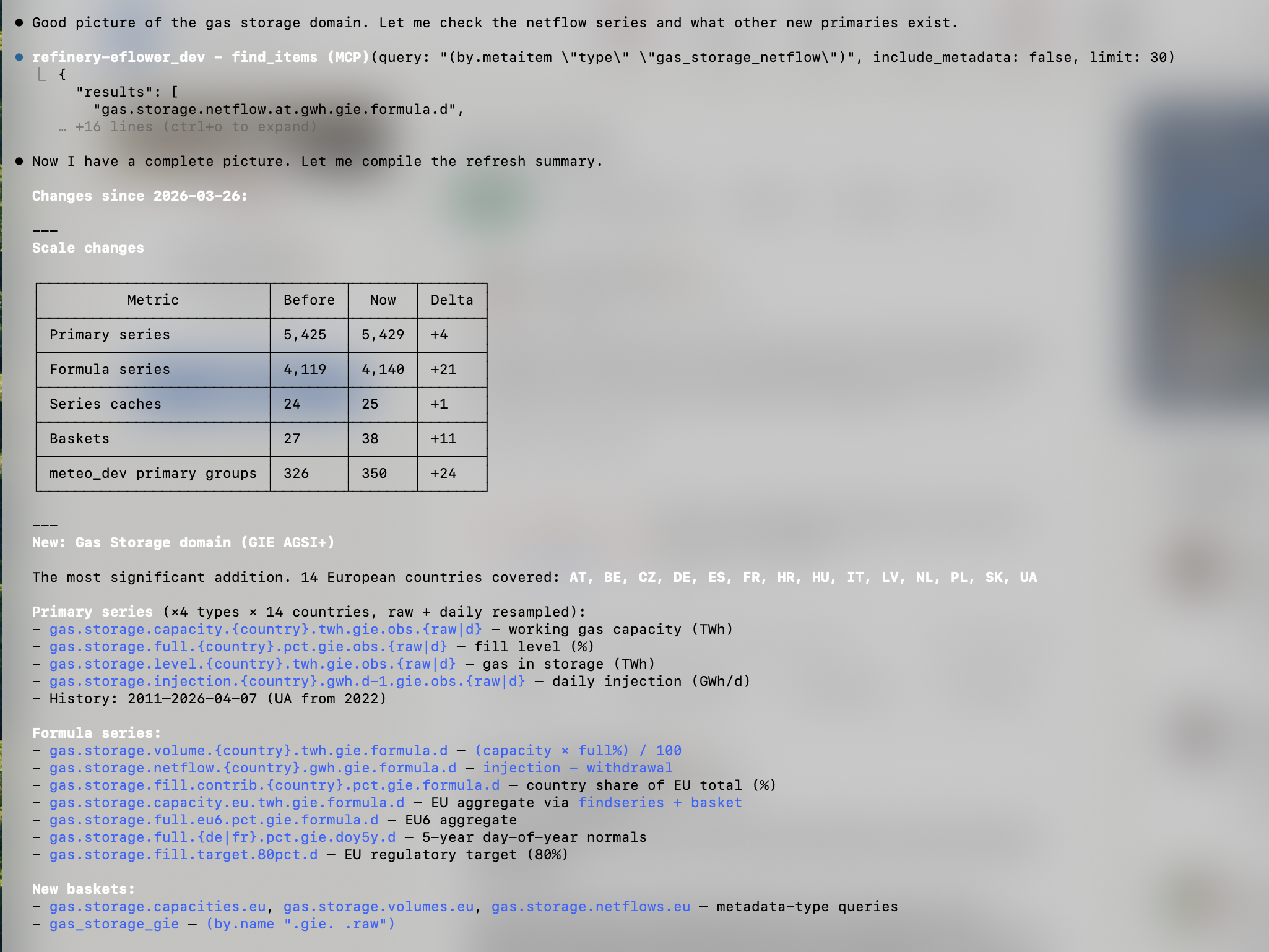

A price forecast had been stuck for hours. I let the AI trace the issue — systematically, following the dependency graph backwards through formulas, scrapers, and meteorological data feeds.
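To make "following the dependency graph backwards" concrete, here is a minimal sketch of that kind of upstream walk. It is not the platform's API: the series names, the graph, and the update ages are all hypothetical and chosen only to echo the story.

```python
from collections import deque

# Hypothetical dependency graph: each derived series maps to its direct inputs.
DEPENDENCIES = {
    "price_forecast": ["residual_load", "gas_price"],
    "residual_load": ["demand_forecast", "solar_forecast", "nuclear_capacity"],
    "demand_forecast": ["meteo_gfs"],
    "solar_forecast": ["meteo_gfs", "rte_solar_scraper"],
}

# Hypothetical hours elapsed since each raw source was last updated.
AGE_HOURS = {
    "gas_price": 1.0,
    "nuclear_capacity": 720.0,
    "meteo_gfs": 9.0,
    "rte_solar_scraper": 0.5,
}


def trace_upstream(target, dependencies):
    """Breadth-first walk from target to every series it depends on."""
    visited = {target}
    order = []
    queue = deque([target])
    while queue:
        node = queue.popleft()
        for parent in dependencies.get(node, []):
            if parent not in visited:
                visited.add(parent)
                order.append(parent)
                queue.append(parent)
    return order


def stale_sources(target, dependencies, age_hours, max_age_hours):
    """Return raw sources (series with no inputs) older than max_age_hours."""
    return [
        name
        for name in trace_upstream(target, dependencies)
        if name not in dependencies and age_hours.get(name, 0.0) > max_age_hours
    ]


print(stale_sources("price_forecast", DEPENDENCIES, AGE_HOURS, max_age_hours=6.0))
```

Note that a freshness check like this only catches sources that stopped updating. A source that keeps updating with wrong values passes it, which is why value-level checks matter too.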

It found three things. A GFS data outage from our meteo instance. An RTE solar scraper that was silently sending zeros instead of NaN — bypassing the priority safety belt we'd built in. And a stale nuclear installed capacity series that nobody had flagged.
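Why zeros are worse than NaN here is easy to show in a few lines. The sketch below assumes the "priority safety belt" is a priority-based fallback that takes the first available value from an ordered list of sources; that reading, the function name, and the numbers are illustrative assumptions, not the Refinery's actual implementation.

```python
import math


def priority_merge(*sources):
    """For each timestamp, keep the first non-NaN value in priority order."""
    merged = []
    for values in zip(*sources):
        chosen = math.nan
        for value in values:
            if not math.isnan(value):
                chosen = value
                break
        merged.append(chosen)
    return merged


# Hypothetical solar production values (MW) for three timestamps.
backup_source = [120.0, 340.0, 510.0]

# A scraper that fails loudly reports NaN, so the merge falls back to the backup.
loud_failure = [math.nan, math.nan, math.nan]
print(priority_merge(loud_failure, backup_source))    # [120.0, 340.0, 510.0]

# A scraper that fails silently reports zeros, which look like valid data
# and win the priority contest, so the backup is never used.
silent_failure = [0.0, 0.0, 0.0]
print(priority_merge(silent_failure, backup_source))  # [0.0, 0.0, 0.0]
```

The fallback logic is doing exactly what it was built to do in both cases. The defect is upstream: a zero is a claim about the world, while NaN is an admission of missing data.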

Would I have found them myself? Yes, and probably faster on the parts I know well. But the AI surfaced two of the three issues sooner than I would have, and it navigated unfamiliar code without hesitation.

Session two: a live market analysis

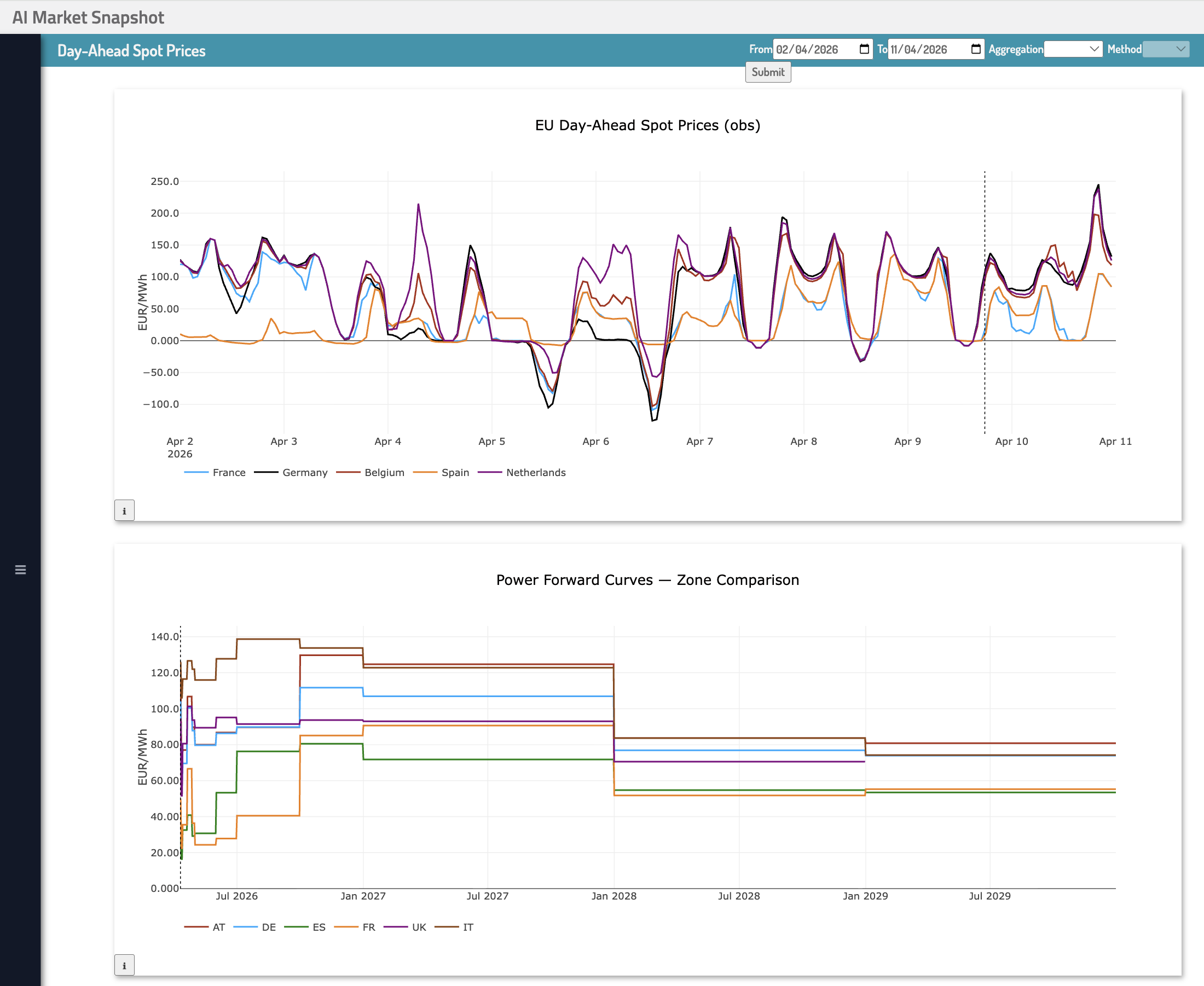

I sat down to analyze the European power market live, during the Hormuz crisis, with gas at €54/MWh and day-ahead prices swinging between zero and €200 on the same day.

The AI didn't just answer questions. It navigated the platform on its own — reading figure configurations, extracting series names from existing dashboards, correcting itself when it reached for model forecasts instead of observed data. At one point it stopped and said: "I wasted two round trips guessing series names. Let me mine the dashboard structure first."
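"Mining the dashboard structure" can be as simple as walking a saved dashboard configuration and collecting every series it references, instead of guessing names. The sketch below assumes a nested dict/list configuration in which traces carry a `series` key; that layout and the series names are hypothetical, not the platform's real format.

```python
def collect_series_names(node, key="series"):
    """Recursively collect string values stored under `key` in nested dicts/lists."""
    names = []
    if isinstance(node, dict):
        for k, value in node.items():
            if k == key and isinstance(value, str):
                names.append(value)
            else:
                names.extend(collect_series_names(value, key))
    elif isinstance(node, list):
        for item in node:
            names.extend(collect_series_names(item, key))
    return names


# Hypothetical dashboard configuration with two figures.
dashboard = {
    "title": "European power market",
    "figures": [
        {
            "title": "Day-ahead prices",
            "traces": [
                {"series": "power.price.da.fr"},
                {"series": "power.price.da.de"},
            ],
        },
        {"title": "Gas", "traces": [{"series": "gas.price.ttf"}]},
    ],
}

print(sorted(set(collect_series_names(dashboard))))
```

One pass over an existing dashboard yields a vetted list of names that a human analyst already chose to look at, which is a far better starting point than trial and error.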

Then it built its own dashboard. Not for me. For itself — so that next session, it could skip the discovery phase entirely and go straight to fetching data. Five figures, saved directly into the platform.

That moment made me think.

The question nobody is asking

We've spent years arguing about whether AI will replace analysts. The more interesting question is: what does your data platform look like to an AI?

Consistent naming conventions. Formula dependency graphs. Versioned audit trails. Structured metadata. We built all of this for human analysts. It turns out AI needs exactly the same things — and uses them the same way.

When you put an AI agent on top of a data lake, it has to reverse-engineer meaning from schemas and table names that were never designed for interrogation. The Timeseries Refinery, by contrast, gives an AI structure at every level: what a series means, where it comes from, what it depends on, how it was corrected over time.

Dashboards don't disappear when AI arrives. They become infrastructure for it.

What made it work

The auditability of the system. The Refinery's formula language makes every derived series fully inspectable — its inputs, its logic, its dependencies. That transparency is what allowed the AI to reason about the data, not just query it blindly.

My conclusion after both sessions: AI won't replace the Timeseries Refinery. It will help business experts who already master sophisticated analytics go further, build use cases faster, and investigate problems they might otherwise have missed.

Build for humans. AI will figure out the rest — and then optimize itself on top.
